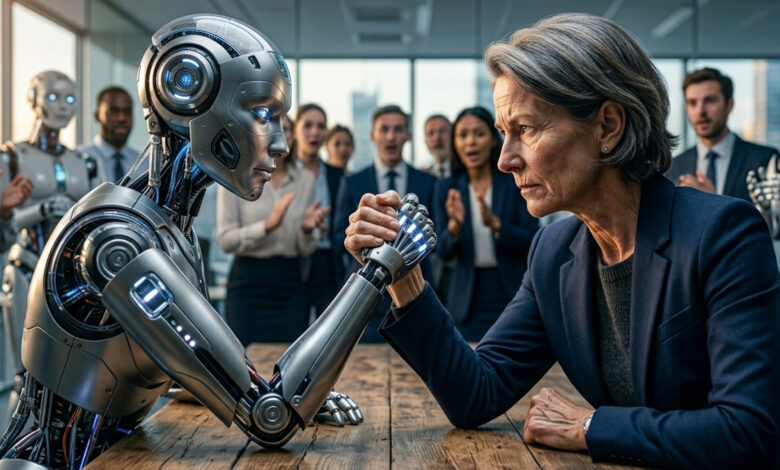

The question of whether humans are still needed in the age of AI seems important. But it is the wrong question, because it presupposes that people are valued primarily for their usefulness: whether they are still productive enough and whether they can keep up. This is an image of humanity that reveals more about our work culture than it does about AI. A personal reckoning.

Are humans still needed in the age of artificial intelligence? At first glance, this question seems relevant and important.

At second glance, however, it seems strangely defensive. More and more, I have the feeling that humans now have to prove that they can still be used alongside AI systems. I think that’s nonsense.

Human or AI: We are asking the wrong question

What particularly bothers me about the articles on this topic is that they always set out to reassure, yet often sound surprisingly insecure. They explain, with grand arguments, that people are still more creative, have empathy, take responsibility and create meaning.

Of course this is all true. But it also sounds a bit as if people had to write a job application listing their remaining skills so that they are not removed from processes entirely in the age of AI.

I think this attitude is wrong, and not because AI wouldn’t change anything. Quite the opposite. AI is already changing work, communication, education, administration, business models and decisions. But the question of whether we “still need” people is, in my opinion, the wrong question to begin with.

People are more than their productivity

Of course, you can – and must – talk about which activities will be automated in the future. You can and should also ask which professions are changing, which skills are becoming more important and where people need to reorient themselves.

In my opinion, all of this is part of a serious debate about AI (and is also something I encourage in my lectures at the “Night of Speakers”, for example). But the question of whether people are still needed at all is something different.

It assumes that people are evaluated primarily based on whether they still fulfill a useful function in a system, whether they are still productive enough and whether they can still “keep up.”

I could put it in other words: In my opinion, the question of whether people are still needed basically revolves around whether they still provide an output that a machine cannot produce faster, cheaper or in larger quantities.

But that is exactly where the problem lies. Humans are reduced to their usefulness. In doing so, we really – and unnecessarily – make people smaller.

Human vs. AI: Who is the machine?

Unfortunately, this way of thinking about people is not new at all. In particular, it was not invented by AI or brought about by its arrival. AI just makes it more visible.

Long before ChatGPT, people in many organizations were treated like systems that should function as smoothly as possible. This can be seen in many places: work has been broken down into metrics, communication has been squeezed into processes, creativity has been translated into results and productivity has been confused with meaning.

This is how it is in many companies: employees spend their days transforming information, preparing votes, writing emails or creating minutes.

If AI takes over some of these tasks (which AI can do really well), then that threatens human activity in a certain way, no question. But it also reveals how uniform, how machine-like, some human work has long since become.

AI is changing the way we look at human performance

I therefore believe that AI does not devalue humans. But it draws the veil away from activities that we have long considered particularly human. A well-formulated standard text is not automatically an expression of deep knowledge. A neat summary is not necessarily evidence of judgment, and a comprehensive presentation is not a strategy.

AI can now carry out many of the above-mentioned activities with impressive quality, and I don’t want to downplay that at all. But in my opinion it doesn’t follow that people become less important.

Rather, it means that we need to look more closely and decide what is mere production of text, structure and suggestion and where actual human achievement begins.

The task of people

In my opinion, human performance begins where it is not just about output, but about putting things in context, about responsibility, standards and ultimately consequences. I could put it another way: it’s about what’s right, not just what’s possible.

Two examples show what I mean:

- AI can word a termination notice more politely or more precisely. But it cannot decide whether a company wants to treat people in this way.

- AI can provide arguments regarding a specific topic or situation. But it can’t take responsibility for them itself.

This is precisely why it is not enough to simply defend humans as more empathetic or creative “machines”. Humans are not a better AI, because they are not AI or anything comparable to it. They are something else.

Human or AI? Responsibility is not a process step

I see this particularly when the topic of “responsibility” is discussed. Many AI debates point out that humans must continue to be involved in decisions, i.e. “in the loop”. That sounds reasonable. But it’s not enough if you only understand it technically.

People are important not because they click “release” at the end. If that were all, they would only have one role in the process, in this case that of release. But responsibility means more.

Responsibility means being addressable. Someone who has responsibility can be questioned. Someone who is responsible has to explain themselves when asked, and can be contradicted. Someone who bears responsibility ultimately has to live with the consequences. An AI doesn’t have to and won’t.

The more decisions are prepared, influenced or accelerated by AI, the more important it becomes to ask who is actually, in real terms, responsible for these decisions.

In my opinion, the most convenient sentence in the coming years will be: “The AI recommended it.” It sounds as if it was preceded by deep engagement with the situation or the topic at hand. However, I fear that this sentence will be more of an elegant form of self-exoneration.

If the AI has recommended it, you may no longer need to explain in detail why something is being done. And somehow it is no longer a decision made by one person alone.

We don’t need pity

I am frustrated by texts that almost pityingly want to save people in the age of AI, because in my opinion they completely miss the actual topic. People undoubtedly remain important.

Their importance does not depend on whether they are somehow better, warmer or more creative than a machine. If we only evaluate people based on what they do in a process, then AI actually becomes a threat. And that shows exactly how small our view of people is.

People don’t need a eulogy for their supposedly last remaining strengths. Rather, they need a society that doesn’t only take them seriously when they can prove that they cannot yet be automated. And they need an image of humanity that knows more than productivity and efficiency.

So the question is not whether we still need humans in the age of AI. Rather, we should ask: What kind of humans do we want to remain in a world where machines are providing more and more answers?

You could also put it another way: AI forces us to ask anew what we actually want to use human intelligence for. For more text and speed? Or for better decisions and more meaningful work?
