We previously discussed why a business would want to develop an enterprise MCP strategy. As we know, Model Context Protocol provides the standard for agent-to-system communication. But the real challenge is implementing it at enterprise scale without compromising security, governance, or the existing architecture. Now it’s time to get practical.

Considerations for an enterprise MCP strategy

Let’s go through the specific considerations and a phased roadmap you need to build enterprise-ready MCP infrastructure. You can move from experimentation to production without completely rebuilding your existing infrastructure. Whether you’re just exploring agentic AI or already running pilots with existing MCP servers, this guide will help you build an MCP strategy that scales.

1. Centralized registry

Publish MCP servers to a central catalog so every authorized agent and developer in your organization can discover and reuse validated business capabilities.

This prevents teams from building duplicate tools for the same business functions. It also provides a single source of truth. You can review, version, and deprecate tools centrally rather than hunting across disconnected implementations.
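As a rough illustration of the idea, the sketch below models a central catalog in memory. The `ServerEntry` and `Registry` classes, their fields, and the server and owner names are assumptions made for this example, not part of MCP or any vendor product; a real registry would add persistence, versioning rules, and access control.

```python
from dataclasses import dataclass, field


@dataclass
class ServerEntry:
    """One catalog entry for a published MCP server (hypothetical shape)."""
    name: str
    version: str
    owner: str
    tools: list[str]
    deprecated: bool = False


@dataclass
class Registry:
    """In-memory stand-in for a central MCP catalog."""
    entries: dict[str, ServerEntry] = field(default_factory=dict)

    def publish(self, entry: ServerEntry) -> None:
        # Reject a second server under the same name so teams reuse, not duplicate.
        if entry.name in self.entries:
            raise ValueError(f"{entry.name} is already published")
        self.entries[entry.name] = entry

    def deprecate(self, name: str) -> None:
        # Deprecated servers stay in the catalog but are no longer discoverable.
        self.entries[name].deprecated = True

    def find_tool(self, tool: str) -> list[ServerEntry]:
        # Discovery returns only active servers that expose the requested tool.
        return [e for e in self.entries.values()
                if tool in e.tools and not e.deprecated]


registry = Registry()
registry.publish(ServerEntry("account-service", "1.0.0", "support-platform",
                             ["get_order_summary", "cancel_order"]))
print([e.name for e in registry.find_tool("cancel_order")])
```

Keeping deprecation as a flag rather than a delete is one possible design choice: the catalog remains the single source of truth for what existed and who owned it.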

2. Security and governance

MCP servers must implement the same security standards as any API endpoint. This means authentication (OAuth, JWT, or integration with your existing identity provider), authorization that enforces the same rules you apply to human users, and validation of every agent request.

That’s easy to say, but in practice enterprise landscapes are rarely homogeneous. APIs and systems (and now MCP servers and AI agents) are owned by different groups, operate under different constraints, and support a wide variety of consumers. This continues the governance challenge that enterprises have faced for the past couple of decades, with a new twist: How do you expose capabilities to agents without creating security gaps or losing control?

One option is to instantiate the MCP server at the gateway layer, where enterprises already manage traffic, security, and policy. By doing so, you route MCP traffic through the same battle-tested gateway as existing API traffic. Policies apply consistently. Routing is handled declaratively. And because many vendors, including MuleSoft, are adding MCP-specific policies, you build on top of your existing infrastructure instead of starting from scratch.

This approach provides several critical advantages:

- Explicit control: Enforce an attribute-based access control (ABAC) policy to choose exactly which MCP tools are exposed to agents, in line with zero-trust practices.

- Unified governance: By directing the traffic to various MCP servers through a centralized gateway, IT admins can ensure proper policies are applied across the enterprise.

- Decoupled architecture: Decoupling the MCP server from the client future-proofs the system. This separation allows you to swap out the MCP server implementation or the entire underlying backend without disrupting the AI application.
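To make the explicit-control point concrete, here is a minimal sketch of an attribute-based check that decides which tools an agent can see. The `ToolPolicy` shape and the `department` and `clearance` attributes are hypothetical and chosen only for illustration; an actual gateway would express this as policy configuration rather than application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical ABAC rule attached to one MCP tool."""
    tool: str
    allowed_departments: frozenset
    min_clearance: int


POLICIES = [
    ToolPolicy("get_order_summary", frozenset({"support", "billing"}), 1),
    ToolPolicy("cancel_order", frozenset({"billing"}), 2),
]


def exposed_tools(agent: dict, policies: list) -> list:
    """Return the tools this agent may see; anything without a passing rule stays hidden."""
    return [
        p.tool
        for p in policies
        if agent.get("department") in p.allowed_departments
        and agent.get("clearance", 0) >= p.min_clearance
    ]


tier1_agent = {"department": "support", "clearance": 1}
billing_agent = {"department": "billing", "clearance": 2}
print(exposed_tools(tier1_agent, POLICIES))
print(exposed_tools(billing_agent, POLICIES))
```

The default is deny: an agent with no attributes, or with attributes that match no rule, sees no tools at all.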

Unlike approaches that treat MCP enablement as a developer-side concern or even a completely new territory, this aligns AI adoption with the groups who already govern access and understand enterprise constraints: IT, security, and developer teams.

3. Cost control and observability

An enterprise approach isn’t just about security. It’s also about financial sustainability. When you manage MCP at the platform level, you solve two major cost drivers:

- Eliminating integration redundancy: Without a central strategy, teams often build redundant MCP servers for the same systems. An enterprise approach encourages tool reuse. For example, one team can build a “Refund Process” MCP server once and make it available for reuse by teams across the company.

- Usage visibility: By routing MCP traffic through a central gateway layer, you gain immediate visibility into tool usage. You can see which agents are calling which systems, monitor for “runaway” AI behaviors that spike costs, and optimize your backend resources based on actual demand.
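The usage-visibility point can be sketched with a few lines of aggregation over gateway logs. The log format (one `(agent, tool)` pair per call in a time window), the agent names, and the threshold are assumptions for this example; real gateways expose this through their own analytics and alerting features.

```python
from collections import Counter

# Hypothetical gateway log: one (agent, tool) pair per MCP tool call in a time window.
CALL_LOG = [
    ("support-agent", "get_order_summary"),
    ("support-agent", "get_order_summary"),
    ("support-agent", "cancel_order"),
    ("batch-agent", "get_order_summary"),
    ("batch-agent", "get_order_summary"),
    ("batch-agent", "get_order_summary"),
    ("batch-agent", "get_order_summary"),
]


def usage_by_tool(call_log):
    """Count calls per tool to see where backend demand actually is."""
    return Counter(tool for _, tool in call_log)


def runaway_agents(call_log, max_calls):
    """Return agents whose call count in this window exceeds the allowed budget."""
    per_agent = Counter(agent for agent, _ in call_log)
    return sorted(agent for agent, count in per_agent.items() if count > max_calls)


print(usage_by_tool(CALL_LOG))
print(runaway_agents(CALL_LOG, max_calls=3))
```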

4. Tools created for processes, not single endpoints

There are two common approaches to building tools: direct API endpoint mirroring, or task- and process-oriented tools. Let’s take a closer look at both.

Anti-pattern: direct API mirroring:

- get-orders-id

- post-orders

- put-orders-id

- delete-orders-id

When each tool maps 1:1 to an API endpoint, the AI agent must understand your entire API design to accomplish any task. If there are too many endpoints, the agent struggles to work out which ones to use for the task at hand.

Recommended approach: task-oriented tools:

- get_order_summary: Combines order data, customer information, and shipment status

- update_order_address: Handles validation and updates across related records

- cancel_order: Manages refunds, inventory adjustments, and notifications

The MCP layer orchestrates multiple API calls when agents need related information together. It adds business logic that makes sense for agent workflows, even if that logic doesn’t exist in underlying APIs.

This approach reduces the cognitive load on agents, makes tools more discoverable, and isolates agents from backend complexity. When your APIs change, you update the MCP server layer without touching agent implementations. Innovation moves forward without leaving stability behind.
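A task-oriented tool such as get_order_summary might look roughly like the sketch below. The three `fetch_*` functions are stubs standing in for existing backend APIs, and their names, fields, and sample data are invented for illustration; in a real MCP server they would be calls to your order, customer, and shipping systems.

```python
# Stubs standing in for existing backend APIs; in practice these would be HTTP calls.
def fetch_order(order_id: str) -> dict:
    return {"id": order_id, "customer_id": "C-7", "items": ["keyboard"], "total": 89.0}


def fetch_customer(customer_id: str) -> dict:
    return {"id": customer_id, "name": "Dana Lee", "tier": "gold"}


def fetch_shipment(order_id: str) -> dict:
    return {"order_id": order_id, "status": "in_transit"}


def get_order_summary(order_id: str) -> dict:
    """Task-oriented tool: one call returns what the agent needs about an order."""
    order = fetch_order(order_id)
    customer = fetch_customer(order["customer_id"])
    shipment = fetch_shipment(order_id)
    # The agent sees one flat result and never learns which backends were involved.
    return {
        "order_id": order["id"],
        "items": order["items"],
        "total": order["total"],
        "customer_name": customer["name"],
        "shipping_status": shipment["status"],
    }


print(get_order_summary("ORD-1"))
```

If the shipping API later changes its response shape, only `fetch_shipment` and the mapping inside `get_order_summary` need to change; the tool's contract with the agent stays the same.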

5. Tailored metadata for agents

Agents choose tools based on descriptions and parameter schemas. This means that your traditional API documentation is not ideal for an AI agent. Poor tool descriptions mean agents won’t use your tools as expected, or at all.

Each tool needs a clear, concise description of what it accomplishes (not what it is), plus examples that help the agent decide which tool to use and how. Parameter descriptions should explain expected formats and constraints and, where possible, defaults.

For example, compare the following two descriptions:

- Weak: “Get order details.”

- Strong: “Retrieve comprehensive order details including items, pricing, shipping status, and customer information. Use this when you need complete order context for customer service inquiries.”

The first one is a typical description of an API endpoint written for systems and developers.

The second description tells the AI agent what action it can take with this tool and when to use it.

The following table compares what API documentation and MCP server metadata each need to provide:

| | API documentation | MCP server metadata |
|---|---|---|
| Primary audience | Developers and systems | Large language models (LLMs) |
| Purpose | To document and validate how to make an API endpoint call | To describe action and execution |
| Description | Technical (e.g. “String, UUID”) | Natural language (e.g. “Use this if the user asks where their package is”) |
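For illustration, here is what agent-oriented metadata for one tool could look like, written as a Python dictionary. MCP tool definitions carry a name, a description, and a JSON Schema under `inputSchema`; the specific parameters, the `ORD-10042` identifier format, and the `undocumented_params` helper are assumptions made up for this sketch.

```python
ORDER_SUMMARY_TOOL = {
    "name": "get_order_summary",
    "description": (
        "Retrieve comprehensive order details including items, pricing, "
        "shipping status, and customer information. Use this when you need "
        "complete order context for customer service inquiries."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "order_id": {
                "type": "string",
                # Hypothetical identifier format, shown so the agent knows what to pass.
                "description": "Order identifier from the confirmation email, e.g. 'ORD-10042'.",
            },
            "include_history": {
                "type": "boolean",
                "description": "Set to true to include past status changes. Defaults to false.",
                "default": False,
            },
        },
        "required": ["order_id"],
    },
}


def undocumented_params(tool: dict) -> list:
    """List parameters that have no description, a cheap check before publishing."""
    props = tool["inputSchema"].get("properties", {})
    return [name for name, spec in props.items() if not spec.get("description")]


print(undocumented_params(ORDER_SUMMARY_TOOL))
```

A check like `undocumented_params` could run in review or CI so that tools with bare, developer-style schemas are caught before agents ever see them.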

Implementation strategy: A phased approach

Organizations mature at different rates. The path to enterprise-ready MCP server infrastructure follows three distinct phases:

- Map existing APIs to business processes

- Establish and scale governance

- Enable multi-server orchestration

Let’s dive into each phase.

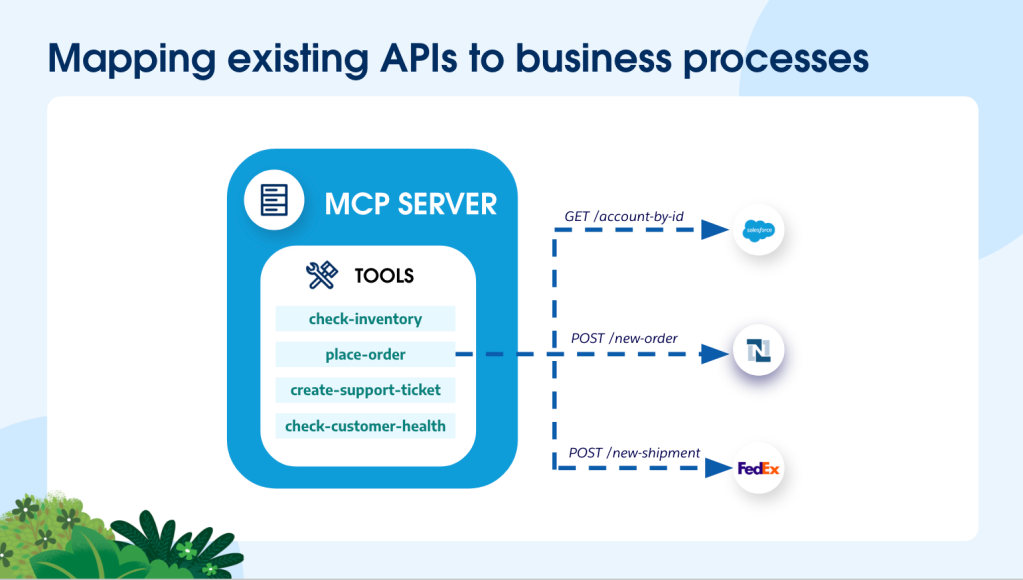

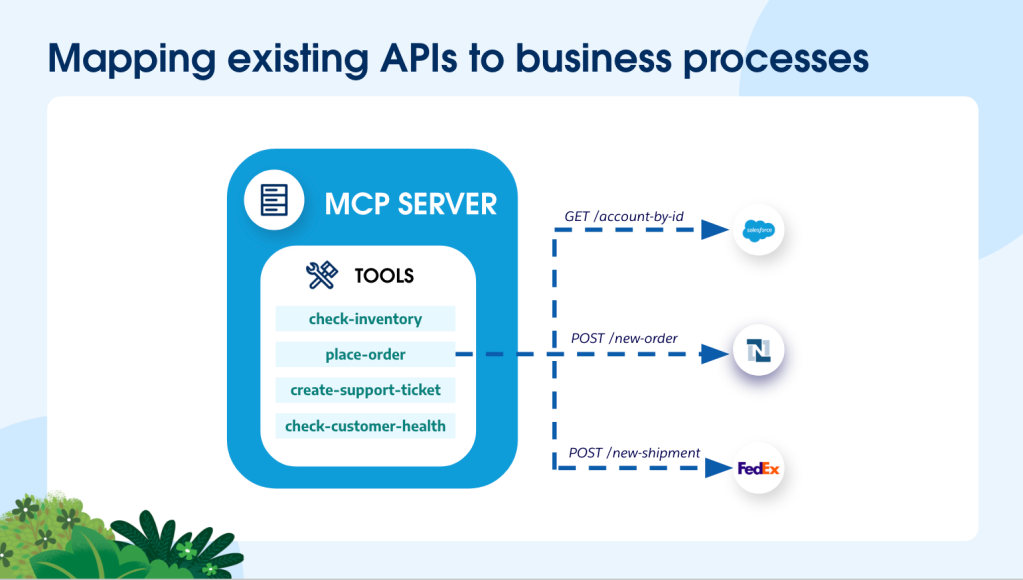

Phase 1: Map existing APIs to business processes

Start by identifying a business process you want agents to handle, then determine how to expose your existing APIs as MCP tools that support that process.

- Example scenario: A customer requests a replacement for a defective order.

- Your existing infrastructure: You already have a CRM API, a shipping API, a billing API, and more. These work well for their current consumers, but agents need a different interface.

- Enterprise MCP strategy approach: Create an MCP server called “Account Service” with a “place-replacement-order” tool that orchestrates calls to these existing APIs. When the customer asks the agent to replace the defective item, the agent can use this single tool to place the order and provide the customer with order and shipping notifications.

The MCP server doesn’t replace your APIs – it provides a task-oriented interface on top of them. It handles the orchestration (calling multiple APIs in sequence), applies business logic, and returns results in a format agents can work with.
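A sketch of how such a tool could sequence the existing APIs follows. The CRM, billing, and shipping functions are stubs with invented signatures and sample data, and the zero-cost replacement rule is an assumed piece of business logic; the point is only to show orchestration and an agent-friendly result.

```python
# Stubs for the existing CRM, billing, and shipping APIs (hypothetical signatures).
def crm_get_order(order_id):
    orders = {"ORD-1": {"customer_id": "C-7", "sku": "KB-200", "address": "1 Main St"}}
    return orders.get(order_id)


def billing_create_order(customer_id, sku, amount):
    return {"order_id": "ORD-2", "customer_id": customer_id, "sku": sku, "amount": amount}


def shipping_create_shipment(order_id, address):
    return {"tracking_id": "TRK-9", "order_id": order_id, "address": address}


def place_replacement_order(defective_order_id: str) -> dict:
    """Orchestrate the replacement flow behind a single tool call."""
    original = crm_get_order(defective_order_id)
    if original is None:
        # Return a readable error so the agent can explain the failure to the customer.
        return {"ok": False, "message": f"Order {defective_order_id} was not found."}
    # Assumed business rule: replacements for defective items are free of charge.
    replacement = billing_create_order(original["customer_id"], original["sku"], amount=0.0)
    shipment = shipping_create_shipment(replacement["order_id"], original["address"])
    return {
        "ok": True,
        "replacement_order_id": replacement["order_id"],
        "tracking_id": shipment["tracking_id"],
        "message": "Replacement order placed at no charge.",
    }


print(place_replacement_order("ORD-1"))
print(place_replacement_order("ORD-404"))
```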

This phase teaches you how agents formulate requests and which information or API combinations are actually useful. You’ll discover which tools get heavy usage and which get ignored: valuable insights that you will need in Phase 2.

Phase 2: Establish and scale governance

Register and govern MCP servers through a centralized control plane, regardless of where they’re deployed.

- Example scenario: The Account Service MCP server now needs to be available across multiple support teams (tier 1 support, technical support, billing support) with different access levels and compliance requirements.

- Enterprise MCP strategy approach: Different teams may deploy their MCP servers in different environments – some in AWS, others in Azure, some on-premises. The key is registering all of them in a central control plane where they can be discovered, secured, and governed consistently.

Teams maintain the flexibility to deploy where it makes sense for their infrastructure and innovation speed. Platform and security teams maintain visibility and control through centralized governance. Marketing’s agent and Engineering’s agent can both discover and use the Account Service MCP server, with permissions enforced consistently.

The MCP servers still orchestrate your existing APIs—now with enterprise-wide governance applied at the control plane layer, not at individual deployment points.

This phase establishes the governance foundation for scale: centralized discovery, authentication, audit trails, and policy enforcement across a distributed deployment model. You’re building the infrastructure that will support hundreds of agent workflows while maintaining security and compliance without sacrificing deployment flexibility.

Phase 3: Enable multi-server orchestration

Allow agents to discover and use tools across multiple MCP servers to complete complex business processes.

- Example scenario: When a customer calls to report a defective product, the resolution process requires coordination across customer service, inventory management, and logistics—each potentially managed by different teams with their own MCP servers.

- Enterprise MCP strategy approach: Configure agents to access multiple MCP servers through your central control plane. When a customer reports a defect, the agent discovers and uses tools from different servers:

- Call “validate_warranty_status” from the account service server

- Execute “process_refund” from the finance server

- Call “check_replacement_inventory” from the inventory server

- Execute “create_shipment_order” from the logistics server

- Call “update_customer_record” from the account service server

- Trigger “send_confirmation_notification” from the account service server

- Trigger “update_customer_health” from the account service server to keep track of customer satisfaction

The agent doesn’t need to know which team built which server or where they’re deployed. It discovers available tools and the control plane handles authentication, authorization, and routing to the appropriate server.
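The routing behavior described here can be sketched as a small in-memory control plane. The `ControlPlane` class, its methods, and the lambda handlers are hypothetical stand-ins; a production control plane would also handle authentication, audit logging, and network routing to remote servers.

```python
class ControlPlane:
    """Toy control plane: discovery, authorization, and routing across MCP servers."""

    def __init__(self):
        self._routes = {}   # tool name -> (server name, handler)
        self._grants = {}   # agent id -> set of permitted tool names

    def register_server(self, server: str, tools: dict) -> None:
        for tool, handler in tools.items():
            self._routes[tool] = (server, handler)

    def grant(self, agent: str, *tools: str) -> None:
        self._grants.setdefault(agent, set()).update(tools)

    def list_tools(self, agent: str) -> list:
        # Discovery shows only registered tools the agent is permitted to call.
        allowed = self._grants.get(agent, set())
        return sorted(tool for tool in self._routes if tool in allowed)

    def call(self, agent: str, tool: str, **args):
        # Authorization is checked centrally before any server is reached.
        if tool not in self._grants.get(agent, set()):
            raise PermissionError(f"{agent} may not call {tool}")
        server, handler = self._routes[tool]
        return {"server": server, "result": handler(**args)}


plane = ControlPlane()
plane.register_server("account-service",
                      {"validate_warranty_status": lambda order_id: "in_warranty"})
plane.register_server("inventory",
                      {"check_replacement_inventory": lambda sku: 12})
plane.grant("support-agent", "validate_warranty_status", "check_replacement_inventory")

print(plane.list_tools("support-agent"))
print(plane.call("support-agent", "check_replacement_inventory", sku="KB-200"))
```

The agent only ever names a tool; which server answers is resolved by the control plane, which is what lets teams move or replace servers without touching agent code.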

Each MCP server continues to orchestrate APIs under its purview. The agent orchestrates across MCP servers to complete end-to-end workflows. Teams maintain ownership of their domains while agents can compose capabilities from multiple sources.

This phase demonstrates the value of your governance foundation from Phase 2. Without centralized discovery and consistent security, agents couldn’t safely access tools across organizational boundaries. With it, you enable true cross-functional automation while maintaining proper access controls and audit trails.

The pragmatic path to enterprise agentic AI

An enterprise MCP strategy isn’t about perfect implementation on day one. It’s about creating a sustainable path that allows your developers to innovate while IT has the visibility to govern and secure your assets.

Start with Phase 1. Identify a real business process. Map your existing APIs to support it. Learn how agents interact with your systems through MCP servers. Move to Phase 2 when you’re ready to scale. Establish centralized governance. Implement gateway-based security. Build the infrastructure that will support hundreds of agent workflows. Advance to Phase 3 when complexity demands it. Enable cross-functional automation by orchestrating tools across multiple MCP servers while maintaining proper access controls.

The enterprises that succeed with agentic AI won’t be those that move fastest or invest most heavily. They’ll be the ones that treat MCP as an interfacing layer and take advantage of the APIs they already have.

Ready to put these principles into practice?

Learn how to make your existing APIs agent-ready with the UI-based MCP Creator and the code-based Anypoint Code Builder in this step-by-step guide. You can also follow along with an interactive demo. Check out MCP: Bringing Standardization to the AI Integration Landscape to learn the basics of MCP.